Robotic Process Automation Optimizes Test Instrumentation

By Andrew Herrera, Keysight Technologies

Product innovation is stoking the demand for new features and driving up the cost of research and development (R&D). Electronics companies spend a lot of money on components, labor, and testing as part of the R&D process. Testing spans every phase of R&D. When incorporating a new design element into a product, manufacturers must test the element for performance. If they replace a small component with a lower-cost or higher-performance component, they must test again.

This applies not only to components but also to software. Because many products are software controlled, performance depends on the allowed outputs of the design as measured by software. In wireless solutions, this can add an extra step to R&D. To ensure their designs do not exceed the limits set by wireless standards, manufacturers often must perform many repetitive tests.

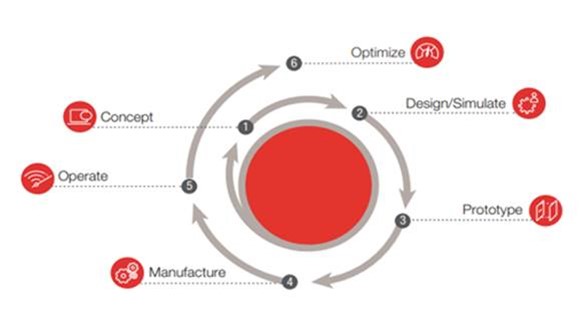

Design, testing, and verification are time-consuming, repetitive, and costly. Design validation engineers must ensure their solution designs perform well under demanding environments while minimizing manufacturing costs and verification stages. As you can see from Figure 1, this is one type of product life cycle development. Each step requires testing, debugging, and verification.

Figure 1. Example of the product life cycle development

Many applications use automation to increase efficiency. Robotic process automation (RPA) can help reduce the repetitive and manual labor involved in testing, such as clicking on software or swapping out test instruments. RPA speeds up hardware validation by allowing engineers to work on other projects or tasks during repetitive testing. Before evaluating whether RPA saves design validation engineers time, we must first understand the tests and associated costs.

Time And Cost For Hardware Verification Tests

When it comes to the time and cost required for testing designs, there are numerous repetitive tasks:

- testing hardware under conditions to simulate real-world environments

- verifying hardware to ensure it conforms with specifications, user expectations, and local environmental regulations

- debugging hardware to ensure it will perform as expected under normal and abnormal conditions

- ensuring that appropriate security measures are in place and that they perform to appropriate safety and security standards

The time and cost of each test vary depending on the project or task at hand. Assume three hours per test as an example. Using the four examples above, that would be 12 hours of engineer time spent on four tests. This estimate assumes that engineers performed all steps correctly, every measurement came out as expected, and there were no errors during instrumentation changes and adjustments. If not, a test can go from three hours to four or five. Automating an engineer’s repetitive processes can save a lot of time.
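The estimate above can be written out as a short calculation. This is only a sketch of the article's example numbers (three hours per test, four tests, and four or five hours when something goes wrong); the function and variable names are illustrative assumptions, not part of any tool.

```python
# Illustrative arithmetic only; the figures are the article's example numbers.
HOURS_PER_TEST = 3   # assumed duration of one error-free test
TESTS = 4            # the four repetitive tasks listed above


def total_hours(hours_per_test: float, tests: int) -> float:
    """Engineer hours for a batch of tests that each take the same time."""
    return hours_per_test * tests


# Error-free case: every step and measurement goes as expected.
baseline = total_hours(HOURS_PER_TEST, TESTS)

# Cases where each test stretches to four or five hours because of rework.
with_rework = [total_hours(hours, TESTS) for hours in (4, 5)]

print(f"error-free batch: {baseline} h")
print(f"batch with rework: {with_rework[0]} to {with_rework[1]} h")
```

Under these assumptions, a single slip per test adds between four and eight engineer hours to a four-test batch.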

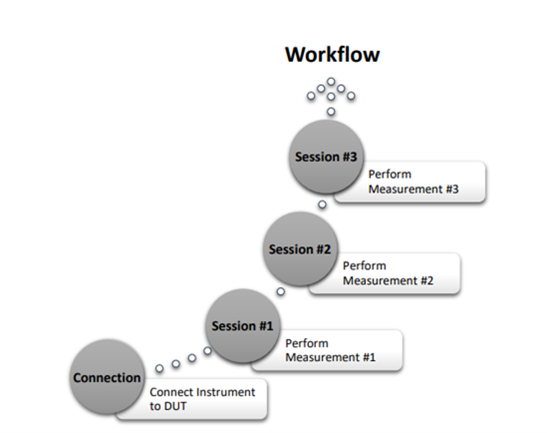

Validation engineers perform each measurement in a configured environment, then stop and set up the next environment and perform the same tests again. They repeat this process until they have tested each scenario. Figure 2 shows a workflow example of hardware verification on a device under test. Throughout the process, the engineers need to switch out the test instruments, change probes and hardware, and adjust settings. Not every test uses the same instruments or software and changing those elements can delay testing.

Figure 2. Workflow example of hardware verification on a device under test

No two test environments are the same. An engineer's test bench often holds test instrumentation from multiple manufacturers, which can cause issues in testing due to unsupported software or instrumentation. In such cases, each task takes longer because of instrument switching, or time and money are lost ordering new, compatible instrumentation.

Expanding to 10 or 20 tasks per configuration can delay your project and drive up the costs. We begin to see the actual cost of development and verification testing in R&D as we dig deeper through each task, but we see a common cost issue in every test: time.

Save Time On Hardware Verification Testing

Every business owner knows that when a project or task takes a lot of time, that means more money spent. However, rushing or cutting corners in engineering hardware verification could lead to catastrophic failures. Therefore, the best way to reduce the amount of time spent on each task is to complete the task more efficiently. High-volume test environments demand efficiency. Measurement steps must focus on execution speed. To optimize tests, developers need control over everything. Test engineers are familiar with several barriers to optimization:

- Instrumentation solutions may come from more than one manufacturer.

- Preconfigured analysis routines often lack speed and flexibility.

- Test software applications carry more measurements than needed.

- Equipment utilization with parallel analysis can be complex and inefficient.

Every step of testing carries a risk of human error. On top of these barriers to optimization, errors can occur during instrument changes or between measurements when inputs are entered incorrectly. Performing many tedious, repetitive tasks can cause engineers to overlook small procedures, and these seemingly minor oversights can create significant errors in test verification.

When these errors occur, engineers spend more time redoing tests, which means more development costs and more time spent doing R&D. RPA can help accelerate debugging and validation while minimizing the risk of human error. Automation removes the risk of overlooking small adjustments because it carries out every step, no matter how tedious.

Let’s evaluate RPA in a test instrumentation world. RPA must enable the configuration and building of complex workflows via parameterization. If automation requires an engineer to be present to make every change, it is not efficient. With that in mind, the automation software needs to provide remote access so that someone who is not nearby can check the test status and change parameters. Using test instrumentation from more than one manufacturer adds another automation challenge and a barrier to optimization.
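To make "building workflows via parameterization" concrete, here is a minimal sketch in plain Python. It is not the API of any RPA product: `Step`, `expand`, `run_workflow`, and the simulated measurement are hypothetical names invented for illustration, and the parameter values are arbitrary. The idea is that one recorded step is replayed for every combination of parameters, with no one at the bench.

```python
from dataclasses import dataclass
from itertools import product
from typing import Callable, Dict, Iterator, List


@dataclass
class Step:
    """One repeatable action in a workflow (hypothetical structure)."""
    name: str
    action: Callable[[Dict[str, float]], float]


def expand(sweep: Dict[str, List[float]]) -> Iterator[Dict[str, float]]:
    """Yield one parameter set per combination of the swept values."""
    keys = list(sweep)
    for values in product(*(sweep[key] for key in keys)):
        yield dict(zip(keys, values))


def run_workflow(steps: List[Step], sweep: Dict[str, List[float]]) -> List[dict]:
    """Replay every step for every parameter combination and log the results."""
    results = []
    for params in expand(sweep):
        for step in steps:
            value = step.action(params)
            results.append({"step": step.name, **params, "value": value})
    return results


def simulated_power_measurement(params: Dict[str, float]) -> float:
    """Stand-in for a real instrument reading (assumed 0.5 dB path loss)."""
    return params["power_dbm"] - 0.5


# Hypothetical sweep: two frequencies and three power levels, six runs in total.
sweep = {"frequency_hz": [2.4e9, 5.0e9], "power_dbm": [-10.0, 0.0, 10.0]}
steps = [Step("measure_output_power", simulated_power_measurement)]

results = run_workflow(steps, sweep)
for row in results:
    print(row)
```

Changing the test plan then means editing the `sweep` dictionary rather than repeating the steps by hand, which is the property the paragraph above asks of an automation tool.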

Finally, all these requirements are challenging on their own, but if an engineer must write all the code to enable this process, that adds more cost, time, and inefficiency. Thus, automation software should allow for recording, playback, and sharing of the automation test, all without manually written software code.

Engineers take many hours to perform different measurements during one test. RPA can help them conduct repetitive tests by changing instrumentation measurement parameters or switching between software applications to take different measurements. This increases performance and efficiency, enabling test engineers to focus on their next project. If automation cuts test times in half, those 12 hours for four tasks can become 12 hours for eight tasks, helping projects finish on time or even early. In manufacturing, better use of test time provides a better return on investment.
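The throughput claim follows directly from the earlier three-hour example. The sketch below assumes, as the text does, that automation halves the time per task; the 50 percent figure is the article's illustration, not a measured result, and the function name is hypothetical.

```python
def tasks_in_budget(budget_hours: float, hours_per_task: float) -> int:
    """Number of whole tasks that fit in a fixed block of engineer time."""
    return int(budget_hours // hours_per_task)


BUDGET_HOURS = 12        # the four-test batch from the earlier example
MANUAL_HOURS = 3.0       # assumed manual time per task
AUTOMATED_HOURS = MANUAL_HOURS / 2   # assumed: automation halves the time

manual_tasks = tasks_in_budget(BUDGET_HOURS, MANUAL_HOURS)
automated_tasks = tasks_in_budget(BUDGET_HOURS, AUTOMATED_HOURS)

print(f"manual: {manual_tasks} tasks in {BUDGET_HOURS} h")
print(f"automated: {automated_tasks} tasks in {BUDGET_HOURS} h")
```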

RPA Is Ideal For Test Instrumentation

In addition to increasing efficiency in testing tasks, automation enables better performance from employees. The many hours spent on repetitive tasks create stress and affect performance. As automation enables better performance and results, engineers can work on multiple projects without the fear of delays or missing performance goals.

Incorporating RPA into your test instrument environment can speed up hardware verification and testing, which supports innovation, and can improve productivity through greater employee satisfaction. RPA is not something to fear or avoid when using test instrumentation. It is something to embrace and incorporate for repetitive testing tasks. RPA can help us save time, a resource we cannot get back.

About The Author

Andrew Herrera is an experienced product marketer in radio frequency and Internet of Things solutions. Andrew is the product marketing manager for RF test software at Keysight Technologies, leading Keysight’s PathWave 89600 vector signal analyzer, signal generation, and X-Series signal analyzer measurement applications. Andrew also leads automation test solutions such as Keysight PathWave Measurements and PathWave Instrument Robotic Process Automation (RPA) software.